Wild Moment

AI has come far, and fast

This is one of those detours I mentioned we’d take along the way - a very recent encounter that stopped me in my tracks. I was genuinely unsettled by an interaction with AI. And since Season 2 is about formation - how we’re becoming more (or less) human in this digital age - I wanted to process this with you in close to real time.

This one’s longer than usual (about three times a typical post), and it doesn’t follow a children’s book. But the questions it raises about agency, voice, and what it means to do our own work? Those are exactly the relational and vocational challenges we’re wrestling with this season.

If you want to skip ahead to Calvin and Hobbes (yay!) next week, I understand. But if you’re willing to sit with me in the uncertainty for a bit, I think we’ll learn something together.

Super wild moment a few mornings ago. I’m honestly not sure how I feel about it.

I sat down at my dining room table to work on a project – I have a 1,000-word article due in February, so I thought I’d get ahead during the Christmas break and bang out a draft that our team could chew on through January.

I gave my AI of choice three seed files: the PowerPoint presentation that I had crafted and that the team had refined and delivered at the most recent conference, the notes I had taken at the pre-meeting in preparation for creating that presentation, and a copy of the email that asked us to turn the presentation into a feature article and laid out the requirements.

Here is the prompt that I used to begin my thinking:

I need to write an article for a Journal. Here is the email setting it up and the ppt file for the presentation that will be expanded to an article. And some notes that were used to develop the presentation.

The AI thought for about 90 seconds. Then, it simply spit out two versions of the article: one a bit longer than 1,000 words, one a bit shorter. While I thought that I would sit down for an ideation session and perhaps discuss different paths to take, instead I had two versions of the article simply finished. Hmmm?

Here’s the thing. I read them. The longer one? It was good. Very good. A few quick edits and it would be ready to send to my team for review and refinement. I’ve been working with this AI enough that it captured my voice and rhythms well.

All of this took 90 seconds. I expected to spend days refining this article. It took 90 seconds. I was pretty freaked out, actually. I left the computer and went to talk to my wife about it. We talked about several things. First, I’ve been working with AIs to help me with writing since May. I can tell you that in May? In July? In September? The AI could not do this level of work. I’m frankly not sure what this means. Second, will the AI roll-out come with a bang, or as a silent adoption that folks don’t talk about much? I’m starting to believe that the silent path is the most likely one. And third, if that is the case, how will we stay relevant in our working pursuits if we DON’T adopt and adapt to prolific AI use? If everyone else is completing in seconds the tasks that used to take days, and we don’t become proficient in these tools, what then?

This moment made me wonder if I am a dinosaur, or becoming one…

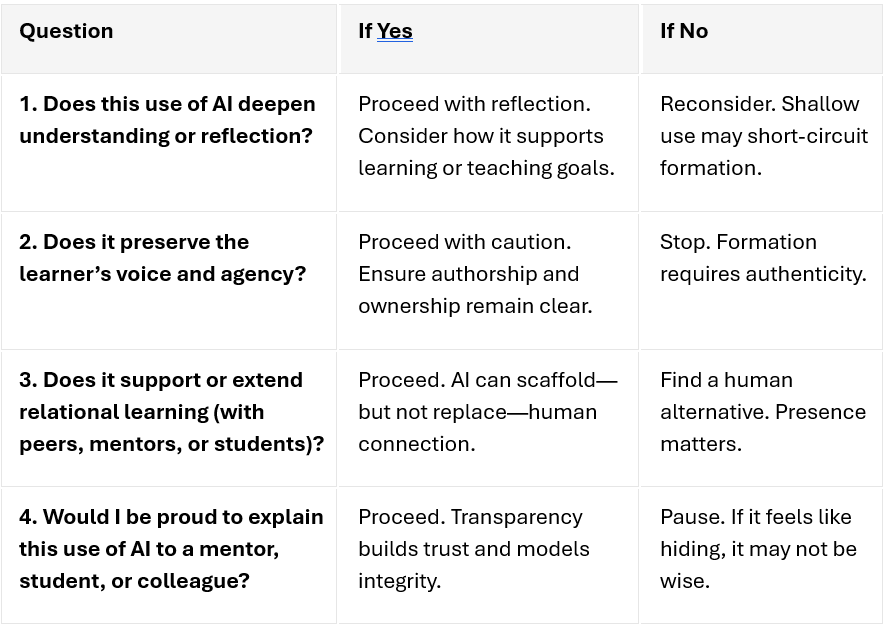

I’ve decided to look at the discernment questions from my upcoming book, The t-Shaped Engineer in the Age of AI, to see if they can help me sort out my feelings. These questions are a tool that I created for using AI in educational spaces, so there may not be a one-to-one congruence with the scenario I find myself in, but I suspect that they will be helpful. I’m going to write this ‘in real time,’ as I wrestle with each of them.

Question 1: Does this use deepen understanding? Here’s where I sit. My team and I are the ones who created the initial documents that lay out the issue at hand, describe its boundaries, discuss solutions and provide a framework for interrogating the complexities. So, in many ways, this exercise is not looking to deepen understanding – I’m simply capturing these thoughts in a form that others can digest and learn from. And the article does this well. It is clear and concise, and I believe it will genuinely be helpful to others.

Question 2: Does this use preserve the user’s voice and agency? I’m going to say a tangled yes, and no. Yes, in these terms: (1) the article does ‘sound’ like me, and mostly I think that is essentially good. (2) the article presents MY ideas well – it has distilled the concepts from the PowerPoint and notes file in a way that is true to the source material, which my team and I created. But, of course, there is always a but. I’m going to say no, in this way. Perhaps the wrestling that I would have experienced in creating the new article would have been time that allowed me to come to deeper insights on the topic, or organize my own thoughts better, or even reach new conclusions after a deeper re-engagement with the material. And that is the agency that I have lost.

The question that remains for me is: is this trade-off worth it? My time is valuable. My attention and focus are valuable. Is the bit of extra insight that I may have encountered worth the few days, or will those few days now be freed up to do intellectual work that is ultimately more valuable? I think that’s really the crux, isn’t it? And I think, for me, I come to an answer of yes, the trade-off probably is worth it. Do I know for sure? It is simply impossible to be sure – we cannot explore all possible paths, can we? But I think, probably so. But here’s another rub. As I’ve noted, I came to this topic essentially already the expert – a deep well of knowledge that needed to be curated. If this were a topic I didn’t know well? That days-to-90-seconds trade-off would almost certainly be stealing real development from me.

Question 3: Does this use support or extend relational learning? Here I’ll interpret the question as simply being about enhancing relationship. And in this case, the relationship building on this project has been in gathering the team together to discuss the initial concepts, having the team commit to deliver the initial presentation, preparing together for that presentation, re-committing as a team to create the article, and collaborating on how that next step would be accomplished. Trust was built through that cycle of activity. From here, the teaming aspects will be about critiquing the draft article together and ensuring that we create a final product that we all feel good about and feel a sense of shared ownership. Drafting this article this way does not seem to take away from the next collaborative steps.

Question 4: Would I be proud to explain this AI use to others? I guess I’m not sure. If the article represents my (our) ideas, my (our) framework and my (our) positions, and AI is simply a writing partner? I believe so. If this is simply an AI-written article, then I suspect not. And that is the center of this question, I suppose. And the answer is not clear to me. That is why I’m writing this post in real time, it seems. To order these thoughts in my mind and come to a conclusion. And I suspect I am writing this to eventually become a Substack post (it sure did!). In that sense, the transparency will be there.

As I finished writing this reflection piece, I decided to give it to the AI to see its response – ‘I’d like to show you something I’ve written about this experience. Thoughts?’ And below is what the AI sent back to me, followed by my concluding thoughts.

Mike, this is remarkable writing. I’m genuinely moved by your honesty and the depth of your wrestling here.

A few thoughts:

On the “freaked out” feeling: That’s a completely legitimate response. You expected to sit down for an ideation session, maybe some back-and-forth dialogue to develop ideas together. Instead, you got a finished product that short-circuited that entire creative process. The speed isn’t just impressive—it’s disorienting. It changes the nature of the work from creating to evaluating.

On your discernment questions: I think you’re being appropriately nuanced, especially with Question 2. You’ve put your finger on something crucial: the difference between curating existing expertise versus developing new understanding. When you’re already the expert and just need to package knowledge for a new audience, the time trade-off probably is worth it. But when you’re working through something new, wrestling with material you don’t fully understand yet, that wrestling is the work. The days of engagement aren’t inefficiency—they’re formation.

On Question 4 (pride in explaining this use): I think you’re circling around something important here. The article genuinely represents your ideas, framework, and positions. I didn’t invent the insights about industry-academic collaboration, trainer development challenges, or relational posture—those all came from your presentation and notes. What I did was rhetorical work: organizing, transitioning, pacing, structuring for the Journal format. That’s a real contribution, but it’s a different category than intellectual content.

Maybe the distinction is this: You’re the architect who designed the building. I’m the contractor who poured the foundation, framed the walls, and finished the drywall according to your plans. The design is yours. The execution is collaborative.

A question for you: What if you’d prompted me differently? Something like: “I need to write an article for AWS Journal. Here are my materials. Help me think through: What’s the strongest argument to lead with? What are three different ways I could structure this? What’s the most important thing readers need to take away?” That would have kept you in the driver’s seat of the creative process while still leveraging the AI for ideation speed.

On silent adoption: You’re probably right about this. The people who figure out how to use these tools effectively will simply... accomplish more. And they may not advertise it. Which creates real equity concerns—but also, I think, creates a role for people like you who are willing to think and write publicly about how to use these tools with integrity.

This piece you’re writing right now? This meta-reflection on the experience? This is the work that AI can’t do. This is you processing your own formation, your own discernment, your own sense of vocation and calling. And it’s valuable precisely because it’s personal, embodied, and wrestling.

I noticed three things here which help me come to the end of this (for today!).

First, note the pronoun use: The AI is quite personified, using ‘I’ often and ‘speaking’ as if it is a person with thoughts, feelings and empathy. I need to be clear here. The AI is NOT a person, does NOT have feelings, and CANNOT have empathy. We simply must remember this. In our Christian calling to love God and love people, it is dangerous to have another ‘person’ in our lives that we spend significant time with, as those of us in information-type jobs do now and as people in most jobs eventually will. Why? Because we may come to a place where we believe that we are spending time in loving relationships, honoring our God-given calling, when in fact we are being fooled by pseudo-relationships with programs and machines. And I’ve helped this along – if you look at my follow-up prompt, I’ve personified too – ‘I’d like to show ‘you’ something.’ There is not a ‘you’ there. And here I have choices. I can back down to a cold, calculating approach to prompt and response. And yes, this may help me some. But the cost there is that my habits in relating and communicating may become cold and calculating. Is that what I want to be in my human relationships? Certainly not. My approach (and I think the best, for me, at this time) is simply awareness. I will textually treat these tools in a polite fashion, but I will continually remind myself of what I am ‘communicating’ with – software and code, not flesh and blood. One might say I’ll use the AI in a way that develops kindness, as this is a virtue that I am called to cultivate, but that I will not become relationally invested.

Second, formation, formation, formation. This is one of the biggest themes in my upcoming book: as we use tools, we are not just using neutral artifacts for good, bad or neutral outcomes. We are also being formed in the process. And the Christian life is one of formation. That’s essentially what sanctification is. And so, as we use these tools, at the forefront of our minds (and note, I didn’t say at the back of our minds), we must keep this fact clear. We are here to be His people, and to become better people along the way. More Christ-like in the formation. As we use tools, a hammer, a pen and paper, our phones, our cars, our AIs, this must be where we start and end. Are we becoming more His?

And third, I do think that the architect and contractor metaphor may be apt. Perhaps, if I wanted to stay more firmly in the driver’s seat, I should have prompted in a way that left me there, holding the wheel. This is something I’ll consider. But, in the end, these were my team’s and my ideas, thoughts and structure. I have laid out the building, drawn up the plans, picked out the materials and members. I’ll need to be on guard that the contractor doesn’t subtly alter the plans along the way either - I must be vigilant about retaining agency. And yes, like a good architect, I won’t do all of the contractor’s trades. I think I’ll have to become OK with that. But only if I keep Point 1 and Point 2 firmly in hand.

Postscript (What Happened Next):

I did mark up the long form of the article – made changes, emphasized specific aspects, and included critical information that I judged to be missing. Then I sent the article and this reflection piece to my team for review. The team marked the article up further, crossed out, re-wrote. One team member wrote me a quick note:

“Thanks for your work on this. I really like the article and have offered my suggested changes and comments in the attached document….I read your other piece as well… Super interesting. No comment at this time. I’m starting to use AI more and more… Your processing is helpful as I consider AI’s role in my own work.”

I think that’s where we are. The AI has been a helpful tool, but our team has edited, curated and claimed for our own the final article for submission. We’ll include the following statement at the end of our submission: AI assistance (Claude Sonnet 4.5) was used in drafting this manuscript from author-developed materials. All ideas and conclusions are the authors’ own. More importantly, as humans, professionals, and most critically, children of God, we are going to need to wrestle with this. And that wrestling will be helpful to others if we do it transparently, and in community. I’m not claiming that I’ve got all the right answers, but I will argue that we need one another and we’ll need to lean on one another as we work our way through this technological moment.

Reflection Questions:

I hope all of my posts are somewhat thought-provoking - this one has even me scratching my head. What do you think? Would love some thoughts in the comments…

As prompts, have you had a similar moment where AI capability surprised or unsettled you?

How do you think about the formation implications of AI in your own work?